This is mostly an update of a guide I wrote a while back for GCN, so it is largely copy, paste and edit, with the exception of new steps for Polaris and Vega.

Introduction

Following this http://www.tomshardware.com/reviews/msi-afterburner-undervolt-radeon-r9-fury,4425.html I have decided to write a guide on undervolting AMD GPUs. This guide will cover the first and second generation of the GCN architecture, although it should apply to third gen too (Tonga and Fiji). I am making this guide for that one guy who is curious and finds this post through Google.

Reasons to undervolt:

-reduced power consumption

-reduced fan noise from both GPU and PSU

-reduced load on PSU

-reduced temperatures

-provides a challenge by finding your card’s absolute limits

Recommended Software Tools

For GCN 1/2

-VBE7 for Gen 1 GCN (Tahiti, Pitcairn and Cape Verde and their refreshes)

Download:http://www.techpowerup.com/forums/threads/vbe7-vbios-editor-for-radeon-hd-7000-series-cards.189089/

-HawaiiReader for Gen 2 GCN (Hawaii and Bonaire?)

Download:https://github.com/OneB1t/HawaiiBiosReader

From the thread:https://www.overclock.net/t/1561372/hawaii-bios-editing-290-290x-295x2-390-390x

-Hex editor for Gen 3 GCN (Will not be covered in this guide)

-GPU-Z

Download:https://www.techpowerup.com/downloads/2627/techpowerup-gpu-z-v0-8-7/

-Overclocking software

My recommendation:http://www.guru3d.com/files-details/msi-afterburner-beta-download.html

-Flashing program

https://www.techpowerup.com/downloads/2531/atiflash-2-71/ for the lazy people and https://www.techpowerup.com/downloads/2306/atiflash-4-17/ for USB users

I recommend following this guide for ATIFlash: https://www.techinferno.com/index.php?/forums/topic/1331-guide-amd-vbios-flashing/

For GCN 2-5 RECOMMENDED

-OverdriveNtool for Hawaii to Vega

Download:https://forums.guru3d.com/threads/overdriventool-tool-for-amd-gpus.416116/

In 2016, AMD introduced Wattman into its drivers and has improved it since, giving the end user much greater control. Although Wattman makes it possible to set individual profiles for every game, it is not as practical as third-party tools because of issues such as profiles not saving and not being applied when the computer starts up. For that reason Wattman is not recommended.

Note:

A GPU with unlocked voltage control is necessary. This may not be possible on Gigabyte cards, as Gigabyte has been locking down the voltages on a good portion of its AMD cards. An example would be the R9 280X Windforce.

As with overclocking, when undervolting you are going to want suitable components to work with. It is recommended that you have a solid PSU that can provide consistent and clean power. A solid power supply means one whose build quality holds up when put under load. I recommend checking reviews done by JonnyGuru, techpowerup and tomshw.de. A poorly designed power supply is like a ticking time bomb for the rest of the components.

Voltage Curve

Below I have provided a Core Freq vs Voltage graph. This one in particular applies to Hawaii based chips and should not be used for first gen GCN products or any products after Hawaii, due to changes in the node and architecture. The graph gives an idea of what voltage would be stable at any specific frequency, which is especially useful when creating a power table. Taking manufacturing variance into account, the actual minimum voltage at any desired frequency should fall within ±30mV of the graph. This graph was made by testing a ~70th percentile ASIC chip.

Peak Efficiency of the GCN 1/2/3 Architecture

It has been known since the introduction of GCN that the architecture, up to Fiji, is most efficient in the range of 800-900MHz on the core. The 7970 launched with a core speed of 925MHz. This is the range in which to maximize MHz/watt.

Some More Data and Reasons

I have provided some measurements that I obtained from GPU-Z. The data is only as accurate as the sensors and software I used. These values were obtained by running Hitman: Absolution maxed out at 1080p for 10 minutes. The GPU used is the MSI R9 390X. As the data shows, when I give up 20MHz and drop a solid 100mV, the average power in drops by 50W and the max power in drops by 60W. With less load variation the power supply does not need to work as hard. The temps drop only slightly (as I used the default fan curve here), but the fan speed drops from 44% to 32%. This gives a strong drop in noise, making the card that much quieter.

My test system at time of testing is as follows (Everything that is powered):

4770K @ 4.4Ghz 2.010VRIN 1.315V Core

32GB of GSkill Sniper 2400mhz 1.65V

Asus Z87-A

MSI GAMING OC R9 390X

Asus Xonar DS

512GB BX100

128GB M550

3TB WD Green 5200RPM

1TB Seagate 7200RPM

AX760

2 NF-F12

2 NF-A15

2 NF-A14 PWM

2 NF-A14 FLX

Mionix Naos 7000

MSI CK Black

Testing Procedure:

The game used is Far Cry 4, for two reasons. Its load is consistent, as staring at a fire inside the safehouse at the Royal Guard Kennels does not introduce random load variables that affect power draw. It is also very heavy on the CPU and GPU, providing high usage on both ends.

Settings are maxed out at 1440p with SMAA. INI edits were also made, such as to alpha to coverage quality.

The results of testing with power efficiency (PE) on and off are below.

As the results show, undervolting can give an excellent drop in power consumption.

The Power Efficiency Option

Based on the data above, it appears that the option doesn’t really do much. Most likely it works by increasing the polling rate at which powertune changes the voltages. Ideally this would mean the card can downclock more quickly during idle moments to reduce power consumption. I base this on the fact that adding the “PP_AllGraphicLevel_DownHyst” value in regedit stopped any downclocking in Crimson. PP most likely refers to powerplay and allgraphiclevel to the DPM states. With downhyst referring to hysteresis, the setting would affect how often powertune checks whether it should downclock to reduce power consumption. This option does not exist for Polaris onward and may have been removed some time ago.

Undervolting Auxiliary Voltage and its Effects

After dropping it by a whopping 131mV, I found that the power consumption does not change at all. This was done at the BIOS level. However, when done on the software side with a tool such as MSI-AB, I noticed a drop of 7-8W. Not bad for -100mV. That said, don’t bother undervolting auxiliary, because there are major stability issues if it is set too low. The risks make it not worth it.

For Vega cards this option is referred to as HBM voltage. It does not actually change the HBM’s voltage, as that is hard set in the bios at 1.35V for Vega 64 and 1.2V for Vega 56. Functionally the two options do the same thing, with the exception that “HBM voltage” limits how low the core voltage may go. The core voltage for Vega may only go as low as what is set for “HBM voltage.”

On to the Actual Undervolting!

Requirements:

-A stressing app and time

I advise against using “apply overclock at startup” while stability testing. If the undervolt is unstable, this can lead to a constant boot cycle. The way out is to boot into safe mode, where Afterburner does not launch, and make adjustments from there.

As with overclocking, you will want to watch for artifacts. An artifact is very apparent when it occurs, as squares will appear completely out of place. Also be aware of the driver freezing, crashing or resetting. These symptoms are caused by instability, most likely from too high a memory frequency on GCN 1-4 and too low a voltage on GCN 5 (Vega). Vega has ECC built into HBM2, and because the error-correcting code (ECC) corrects the errors, artifacts are very difficult to see. This also makes testing on Vega more difficult.

So, using your overclocking app, you will want to follow this order: drop the voltage -> check for instability -> if it fails, decrease the core frequency; if it passes, decrease the voltage again. This is a rinse and repeat process. I recommend dropping the voltage in 6.25mV steps and the core by 10 or 5 MHz. The voltage controller AMD has used since Hawaii, and presumably on later generations, works in 6.25mV intervals and is also used on Zen motherboards.
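The rinse and repeat order above can be sketched as a small loop. This is only a rough sketch of the procedure, not a tool that talks to the GPU: `is_stable` is a hypothetical stand-in for you running your stress app and reporting pass or fail, and the two floor values are stopping points I added so the loop ends. It also assumes the starting voltage and clock are already stable.

```python
from typing import Callable, Tuple

VOLTAGE_STEP_MV = 6.25  # voltage step recommended in the guide
CLOCK_STEP_MHZ = 10     # the guide suggests 10 or 5 MHz


def find_undervolt(
    start_mv: float,
    start_mhz: int,
    is_stable: Callable[[float, int], bool],  # hypothetical: wraps a manual stress test
    floor_mv: float,   # assumption: lowest voltage you are willing to try
    floor_mhz: int,    # assumption: lowest core clock you are willing to accept
) -> Tuple[float, int]:
    """Drop voltage while stable; when a step fails, give up some core clock."""
    best = (start_mv, start_mhz)  # assumed stable starting point
    mv, mhz = start_mv - VOLTAGE_STEP_MV, start_mhz
    while mv >= floor_mv and mhz >= floor_mhz:
        if is_stable(mv, mhz):
            best = (mv, mhz)           # pass: remember it, then drop voltage again
            mv -= VOLTAGE_STEP_MV
        else:
            mhz -= CLOCK_STEP_MHZ      # fail: decrease the core frequency and retest
    return best


if __name__ == "__main__":
    # Pretend card that needs (clock + 120) mV to be stable; purely made up.
    fake_card = lambda mv, mhz: mv >= mhz + 120
    print(find_undervolt(1250.0, 1080, fake_card, floor_mv=1180.0, floor_mhz=1000))
```

In practice each `is_stable` call is a long gaming or stress session, so the floors matter: they decide how much clock you are prepared to trade away before calling it done.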

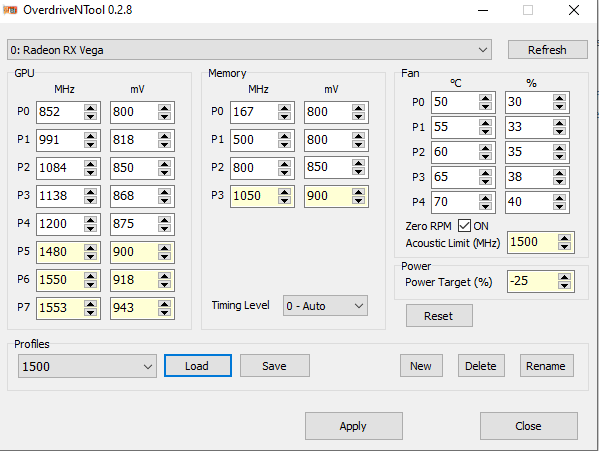

Vega In Particular

With GCN 5, AMD has changed how powertune works. Vega now takes several variables into account, including die temperature, fan speed, power consumption, voltage and GPU load. With the first 4 generations of GCN, the core clock set was the core clock put out. With Vega this is no longer the case.

In Figure 4 the P7 state is set to 1553 MHz at 943mV. In Battlefield 1 the actual core clock ranges from 1505 to 1480 MHz as the “GPU Power” draw goes from 160 to 180W respectively. The frequency also changes with the load at that instant. The voltage fluctuates between 0.912 and 0.900V post Vdroop. The card has been set to hard cap at 180W through the -25% power target, hence the frequency throttling. When not throttling, the core frequency is around 1500 ± 5 MHz in most cases. This is one of the disconnects with Vega: what is set is not what is output, and that needs to be taken into consideration when tuning. Because of this, increasing the set frequency by 10 MHz may not create a 10 MHz increase in the actual core clock.

As the GPU under load will be swapping between P7 and P6, those two states are the main focus. P5 and below just need reasonable settings. If the load is too low, such as when playing a game like League of Legends, then the stability of P5 and below may need to be tested. This is the one benefit of Wattman: it is possible to right click a state and set it as the maximum or minimum state, which makes it possible to test each state individually.

Notes:

When undervolting second gen GCN cards (Hawaii) you might gain some stability by also dropping the memory clock, as Hawaii has the memory tied to the core voltage.

Also, if you set the voltage too low you may find instability on your 2D clocks. This is because second and third gen GCN cards use an offset rather than a set voltage, and an applied offset affects all states. For example, idle voltage on the 390X is 0.9V. With a -100mV offset it drops to 0.8V, which may make the card unstable at the desktop. There is a workaround called “Bios editing.” I do not recommend bios editing, as the same can now be done with OverdriveNtool.
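To see why the offset bites at idle, here is a small sketch of the arithmetic. The 900mV idle figure and the -100mV offset come from the example above; the 1250mV load value and the 850mV "safe floor" are made-up numbers for illustration only.

```python
def apply_offset(states_mv, offset_mv):
    """Return a new table with the same offset added to every state (values in mV)."""
    return {name: mv + offset_mv for name, mv in states_mv.items()}


def states_below(states_mv, floor_mv):
    """List the states that end up under a chosen minimum voltage."""
    return [name for name, mv in states_mv.items() if mv < floor_mv]


# Idle value from the 390X example; the load value is hypothetical.
stock = {"idle": 900, "load": 1250}
undervolted = apply_offset(stock, -100)

print(undervolted)                      # {'idle': 800, 'load': 1150}
print(states_below(undervolted, 850))   # ['idle'] with the assumed 850mV floor
```

The load state still has plenty of room, but the idle state has been dragged down with it, which is where the desktop instability comes from.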

Using OverdriveNtool

OverdriveNtool is the most versatile third-party tool for AMD GPUs. It allows multiple profiles to be saved, as well as saving a profile to the registry. This makes it possible to have one profile at 1500 MHz and another at 1650 MHz, all up to the end user.

When running OverdriveNtool in administrator mode, the PPtable editor can be accessed by right clicking the app. This opens the “SoftPowerPlay Table Editor”, which allows the settings to be saved in the registry. This avoids any need to mod the bios to save the settings. Bios modding is not possible with Vega GPUs.

Bios Editing

Disclaimer:

I do not take any responsibility for any mistakes you make. When you do a bios edit you accept full responsibility for the chance that you make a mistake and brick your GPU. Also, credit to gupsterg’s thread for making this part possible.

This only really applies to second and third gen GCN as you can have programs such as MSI AB apply your undervolt at startup for your first gen GCN card.

Once you find your desired frequency at X voltage I recommend you set it in bios for second and third gen GCN cards as this will allow a workaround for the offset. I will only be covering second gen here.

HawaiiReader

Exporting your stock bios is done via GPU-Z, by clicking the arrow beside the “bios version” and saving it. This will be the bios you will be working with. After opening HawaiiReader, do the following:

1. Open and direct file to the bios

2. Save the bios as something else. This way you will have your stock bios and X bios in case something goes wrong and you need to reflash your stock bios on.

3. With your X bios you will now want to set your desired freq @ voltage. This is done by going to the powerplay tab and entering your voltage under the “vol” heading in the DPM 7 column. The value you put here MUST be the same as the value placed under every other table. This means the DPM7 voltage value must be the same in the following tables: GPU freq table, MEM freq table, StartVCELimitTable, StartACPLimitTable, StartSAMULimitTable and StartUVDLimitTable. The latter four are found under the limit table heading.

Eg. I left the frequency the same (1080MHz) but dropped the voltage by a solid 100mV. In Aida64 I right click to bring up a list, then ->Video Debug->ATI GPU registers. This gives the “GPU Pstates List”, where the DPM7’s VID = 1.3XXXXV. From there I took off 0.1V and set it to 1.2XXX volt in the bios. The exact value to put in can be found in gupsterg’s Hawaii bios editing thread linked at the beginning of this post.

4. Save your edits and then flash your new bios.
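Step 3 is the easy one to get wrong, since the same DPM7 value has to be typed into several tables. A minimal sketch of a sanity check, assuming you copy the DPM7 values by hand into a Python dict before flashing. The dict layout is my own invention (it is not something HawaiiReader produces), and 1200 and 1300 are placeholder numbers, not real bios values.

```python
# Table names as listed in step 3.
REQUIRED_TABLES = [
    "GPU freq table",
    "MEM freq table",
    "StartVCELimitTable",
    "StartACPLimitTable",
    "StartSAMULimitTable",
    "StartUVDLimitTable",
]


def mismatched_tables(dpm7_values, expected):
    """Return the tables whose DPM7 value is missing or differs from the expected one."""
    return [name for name in REQUIRED_TABLES if dpm7_values.get(name) != expected]


# Placeholder values typed in by hand; one table was "forgotten" on purpose.
edited = {name: 1200 for name in REQUIRED_TABLES}
edited["StartUVDLimitTable"] = 1300

print(mismatched_tables(edited, 1200))  # ['StartUVDLimitTable']
```

An empty list means every table agrees with the value you set on the powerplay tab.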

Beyond that -100mV

Say you are like me and want to maximize frequency while using the ABSOLUTE minimum voltage. There are inherent problems with this, especially once you go below 1000MHz. For me, 965MHz core and 1250MHz memory was the best in terms of performance/watt. This was done with -50mV on top of the -100mV from the bios.

Issues crop up at low frequencies and voltages, and they have to do with how powertune works. Powertune swaps between DPM states with extrapolation in between. When the voltage gets too low (as mine did with the additional -50mV), several states end up sharing the exact same voltage while differing in frequency.

[ GPU PStates List ]

DPM0: GPUClock = 300 MHz, VID = 0.92402 V

DPM1: GPUClock = 533 MHz, VID = 0.97536 V

DPM2: GPUClock = 709 MHz, VID = 1.02669 V

DPM3: GPUClock = 818 MHz, VID = 1.05852 V

DPM4: GPUClock = 864 MHz, VID = 1.05852 V

DPM5: GPUClock = 904 MHz, VID = 1.05852 V

DPM6: GPUClock = 936 MHz, VID = 1.10986 V

DPM7: GPUClock = 965 MHz, VID = 1.15503 V

Notice how DPM3-5 share the same voltage while having different frequencies. If the load is too low, crashes can happen because of how powertune acts in this region. This particularly happens when you use FRTC and the frequency fluctuates. The workaround is to actually use a higher frequency and voltage. Mine is 1060MHz and -19mV, as it creates a larger range between the states, as seen here:

DPM0: GPUClock = 300 MHz, VID = 0.92402 V

DPM1: GPUClock = 533 MHz, VID = 0.97536 V

DPM2: GPUClock = 780 MHz, VID = 1.02669 V

DPM3: GPUClock = 900 MHz, VID = 1.05852 V

DPM4: GPUClock = 950 MHz, VID = 1.10986 V

DPM5: GPUClock = 995 MHz, VID = 1.15503 V

DPM6: GPUClock = 1030 MHz, VID = 1.18686 V

DPM7: GPUClock = 1060 MHz, VID = 1.20637 V

With no overlapping voltages at different states, there are no issues related to powertune and FRTC. This can also be done with OverdriveNtool.
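If you would rather not eyeball the list, the overlap check can be sketched in a few lines. This assumes you paste the “GPU PStates List” text in the same format as the two dumps above; the function names are my own.

```python
import re
from collections import defaultdict

# Matches lines like "DPM3: GPUClock = 818 MHz, VID = 1.05852 V"
PSTATE_RE = re.compile(r"(DPM\d+): GPUClock = (\d+) MHz, VID = ([\d.]+) V")

LOW_CLOCK_DUMP = """
DPM0: GPUClock = 300 MHz, VID = 0.92402 V
DPM1: GPUClock = 533 MHz, VID = 0.97536 V
DPM2: GPUClock = 709 MHz, VID = 1.02669 V
DPM3: GPUClock = 818 MHz, VID = 1.05852 V
DPM4: GPUClock = 864 MHz, VID = 1.05852 V
DPM5: GPUClock = 904 MHz, VID = 1.05852 V
DPM6: GPUClock = 936 MHz, VID = 1.10986 V
DPM7: GPUClock = 965 MHz, VID = 1.15503 V
"""

WIDER_DUMP = """
DPM0: GPUClock = 300 MHz, VID = 0.92402 V
DPM1: GPUClock = 533 MHz, VID = 0.97536 V
DPM2: GPUClock = 780 MHz, VID = 1.02669 V
DPM3: GPUClock = 900 MHz, VID = 1.05852 V
DPM4: GPUClock = 950 MHz, VID = 1.10986 V
DPM5: GPUClock = 995 MHz, VID = 1.15503 V
DPM6: GPUClock = 1030 MHz, VID = 1.18686 V
DPM7: GPUClock = 1060 MHz, VID = 1.20637 V
"""


def parse_pstates(text):
    """Turn the pasted dump into (state, MHz, volts) tuples."""
    return [(m.group(1), int(m.group(2)), float(m.group(3)))
            for m in PSTATE_RE.finditer(text)]


def shared_voltages(pstates):
    """Map each VID used by more than one state to the states that share it."""
    groups = defaultdict(list)
    for name, _mhz, vid in pstates:
        groups[vid].append(name)
    return {vid: names for vid, names in groups.items() if len(names) > 1}


print(shared_voltages(parse_pstates(LOW_CLOCK_DUMP)))  # {1.05852: ['DPM3', 'DPM4', 'DPM5']}
print(shared_voltages(parse_pstates(WIDER_DUMP)))      # {}
```

An empty result for your own table means no two states share a voltage, which is the condition described above.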

Dangers of undervolting

There are no inherent risks to undervolting unless you mess up, and then it’s your own fault. In theory, lower voltage should increase the lifespan of your GPU, as less heat is generated in the transistors. As documented in the Tom’s Hardware review linked at the very start of the post, lower voltage means lower temps, and lower temps mean the fans spin slower. With a slower spinning fan there is less heat transfer between the heat sink and the air, which can cause temps to increase again. The PCB will also run hotter due to the slower spinning fans. The workaround is to use a more aggressive fan curve to increase the heat transfer.